More Than a Model: The Compounding Impact of Behavioral Ambiguity and Task Complexity on Hate Speech Detection

DOI: https://doi.org/10.35566/jbds/xu2025

Keywords: Hate Speech Detection, Behavioral Data Science, Label Ambiguity, Transformer Models, Text Classification

Abstract

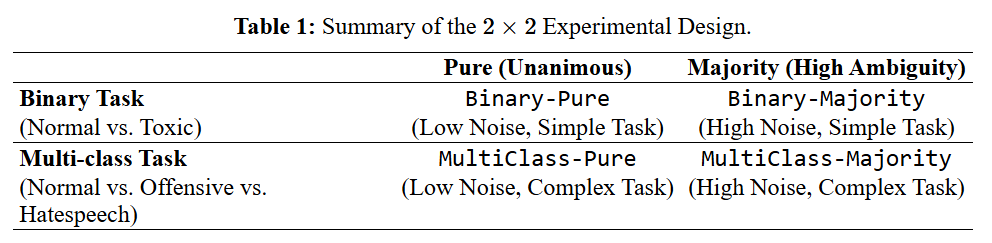

The automated detection of hate speech is a critical but difficult task due to its subjective, behavior-driven nature, which leads to frequent annotator disagreement. While advanced models (e.g., transformers) are state-of-the-art, it is unclear how their performance is affected by the methodological choice of label aggregation (e.g., majority vote vs. unanimous agreement) and task complexity. We conduct a 2×2 quasi-experimental study to measure the compounding impact of these two factors: Labeling Strategy (low-ambiguity "Pure" data vs. high-ambiguity "Majority" data) and Task Granularity (Binary vs. Multi-class). We evaluate five models (Logistic Regression, Random Forest, Light Gradient Boosting Machine [LightGBM], Gated Recurrent Unit [GRU], and A Lite BERT [ALBERT]) across four quadrants derived from the HateXplain dataset. We find that (1) ALBERT is the top-performing model in all conditions, achieving its peak F1-Score (0.8165) on the "Pure" multi-class task. (2) Label ambiguity is strongly associated with performance loss; ALBERT's F1-Score drops by approximately 15.6% (from 0.8165 to 0.6894) when trained on the higher-disagreement "Majority" data in the multi-class setting. (3) This negative effect is compounded by task complexity, with the performance drop being nearly twice as severe for the multi-class task as for the binary task. A sensitivity analysis confirmed this drop is not an artifact of sample size. We conclude that in HateXplain, behavioral label ambiguity is a more significant bottleneck to model performance than model architecture, providing strong evidence for a data-centric approach.
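To make the two labeling strategies and the reported drop concrete, the following is a minimal Python sketch, not the authors' pipeline. It assumes each post carries a list of per-annotator labels, that "Pure" keeps only posts with unanimous agreement, and that "Majority" keeps any post with a strict majority label; the exact construction of the "Majority" subset is not specified in the abstract. The function names `aggregate` and `relative_drop` and the example label strings are hypothetical.

```python
from collections import Counter


def aggregate(labels, strategy):
    """Aggregate per-annotator labels for one post.

    Returns the aggregated label, or None when the post does not
    satisfy the strategy and would be excluded from that subset.
    Assumption: "pure" = unanimous agreement, "majority" = strict majority.
    """
    counts = Counter(labels)
    top_label, top_count = counts.most_common(1)[0]
    if strategy == "pure":
        return top_label if top_count == len(labels) else None
    if strategy == "majority":
        return top_label if top_count > len(labels) / 2 else None
    raise ValueError(f"unknown strategy: {strategy!r}")


def relative_drop(baseline, degraded):
    """Relative performance loss of `degraded` with respect to `baseline`."""
    return (baseline - degraded) / baseline


# Illustrative annotations (made up for this sketch, not taken from HateXplain).
unanimous = ["hatespeech", "hatespeech", "hatespeech"]
contested = ["hatespeech", "offensive", "hatespeech"]

print(aggregate(unanimous, "pure"))      # kept under unanimous agreement
print(aggregate(contested, "pure"))      # excluded: annotators disagree
print(aggregate(contested, "majority"))  # kept under majority vote

# Relative F1 drop for ALBERT on the multi-class task, using the reported scores.
print(round(relative_drop(0.8165, 0.6894) * 100, 1))
```

With the F1-Scores reported above, `relative_drop(0.8165, 0.6894)` gives roughly 0.156, which matches the stated drop of approximately 15.6% and shows that the figure is a relative loss rather than a difference in percentage points.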
